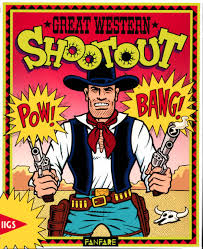

Performance Shootout of Nearest Neighbours: Contestants

Part 2 of the benchmark of libraries for nearest-neighbour similarity search: what is the best software out there for similarity search in high-dimensional vector spaces?

Document Similarity @ English Wikipedia
